It’s time for the sequel to my May 2024 newsletter issue where I described how Microsoft’s products contain features that are effectively dead. They are not formally deprecated, yet any customer would be wise to not accidentally touch these things. Because getting bitten by a zombie feature may turn your business application into a system that starts infecting the systems around it.

These days, it’s very fashionable to write “X is dead!” type of posts on social media. Nowhere is this more pronounced than in the field of AI frontier labs, with Anthropic’s and OpenAI’s announcements on new capabilities instantly tanking the stock value of incumbents in software or professional services.

These new features from AI companies, or companies aspiring to become one, are more like fairies. Whereas zombies have once been real, living people, many of the products built on top of large language models are creatures that have never been part of our world. You have to stretch your imagination and share the vision of AI CEOs for you to be able to see these fairies and the magical powers they possess.

Why Microsoft is the king of dead features

A few days ago, I watched a fun rant from ThePrimeagen (Michael Paulson) about "The Copilot Problem". In the video, he showed how Gemini agreed with him that Microsoft has the worst product naming conventions in tech. Yeah, can't blame Gemini. But what do LLM chatbots in general think about this? I decided to find out, by performing a highly scientific experiment of giving the same prompt to six AI chats from different vendors. Below are the results:

Every single one agreed on the top names. It's either Microsoft or Google. Only Copilot and Claude voted for Google as the worst offender. ChatGPT, Gemini, DeepSeek and Meta AI all said “c’mon, you know it’s Microsoft, right?😉”. In this case, it’s easy for me to agree with the wisdom of the language model. 4 vs. 2, plus my personal opinion, makes this a clear victory for Microsoft.

When I showed the results to Claude, it offered a great insight. The strategies of Microsoft and Google in how they handle failure are very different: Google kills their products and launches new ones. Microsoft "reimagines" the products and keeps the same name around. Instead of the harsh yet determined approach demonstrated by the growing list on Killed by Google, Microsoft tries to distort reality by changing the meaning of a brand or feature name.

Not only does this make the product offering cryptic and confusing. It also encourages the pattern where features and services are launched but never properly shut down. This means that the many lists of deprecated features are merely the tip of the iceberg. Many more services are stuck in a permanent holding pattern where Microsoft will deliver zero improvements or new capabilities. They remain technically available yet customers should actively be looking for an alternative way to build their systems to avoid any dependencies.

Because why would you want to rely on some technology that was developed 20 years ago and has not been improved since? This would be particularly insane in the world of cloud services where customers are paying a monthly fee for access to an “evergreen platform”. The key reason you’d commit to a contract and pay continuously for the software, instead of doing a one-off development project and building bespoke software, is that the SaaS vendor should keep investing in it. Once evidence shows that is not happening anymore, it’s time to re-evaluate what tools you’re using.

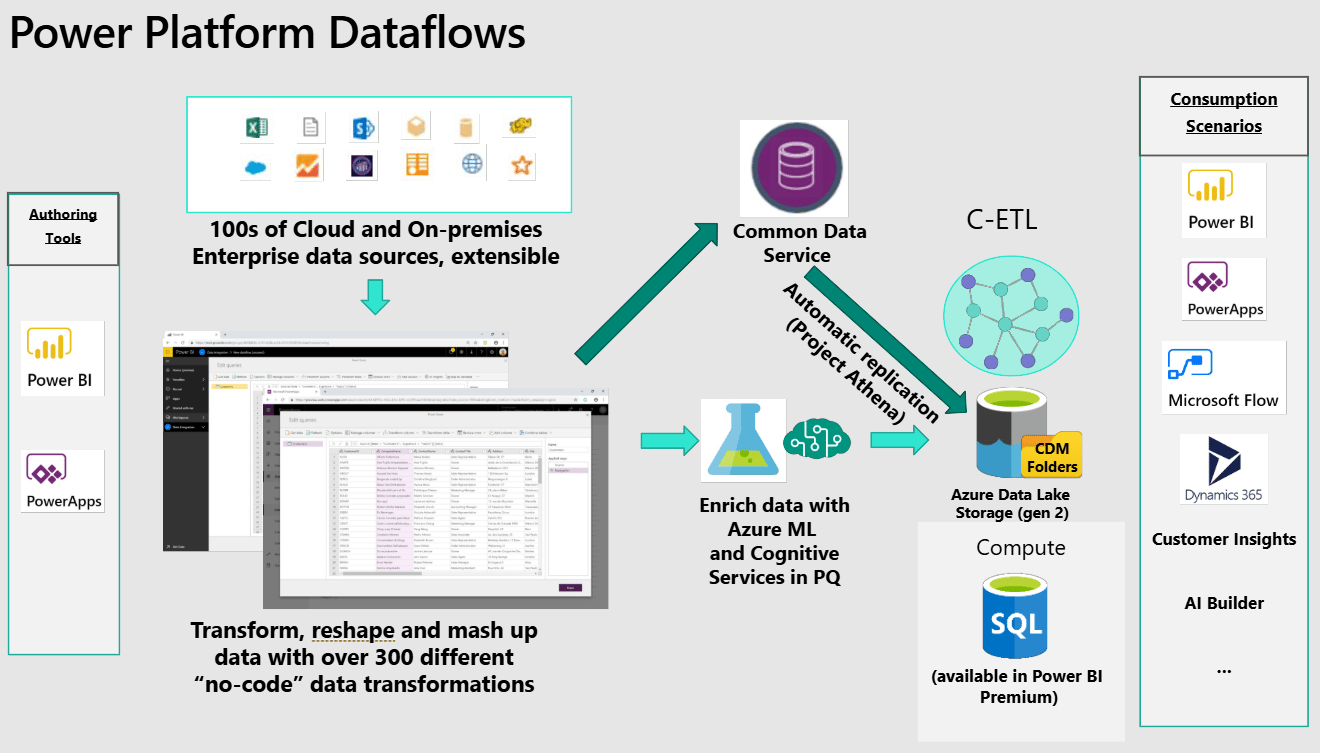

Power Platform Dataflows: the CDM relic

Whereas the Common Data Model itself turned out to be a nothingburger with little substance beyond the Ignite 2018 keynote slides, this era did deliver some tangible tools to work with data in the low-code platform of Microsoft. As long as you ignored the lack of anything “Common” that would unite SaaS tools across Microsoft, SAP and Adobe (as promised by the CEOs on stage). Dataflows are one real service from that era.

Dataflows are a great example of the product naming practices of Microsoft, given how there are two different products called by that name. There are Standard Dataflows that load data into a Dataverse table, and nothing more. Then, there are Analytical Dataflows for getting data into a format that Power BI or other analytics tools in the MS stack can work with. People would commonly refer to these as Power Platform Dataflows and Power BI Dataflows. The latter are probably used 50x more often in the real world.

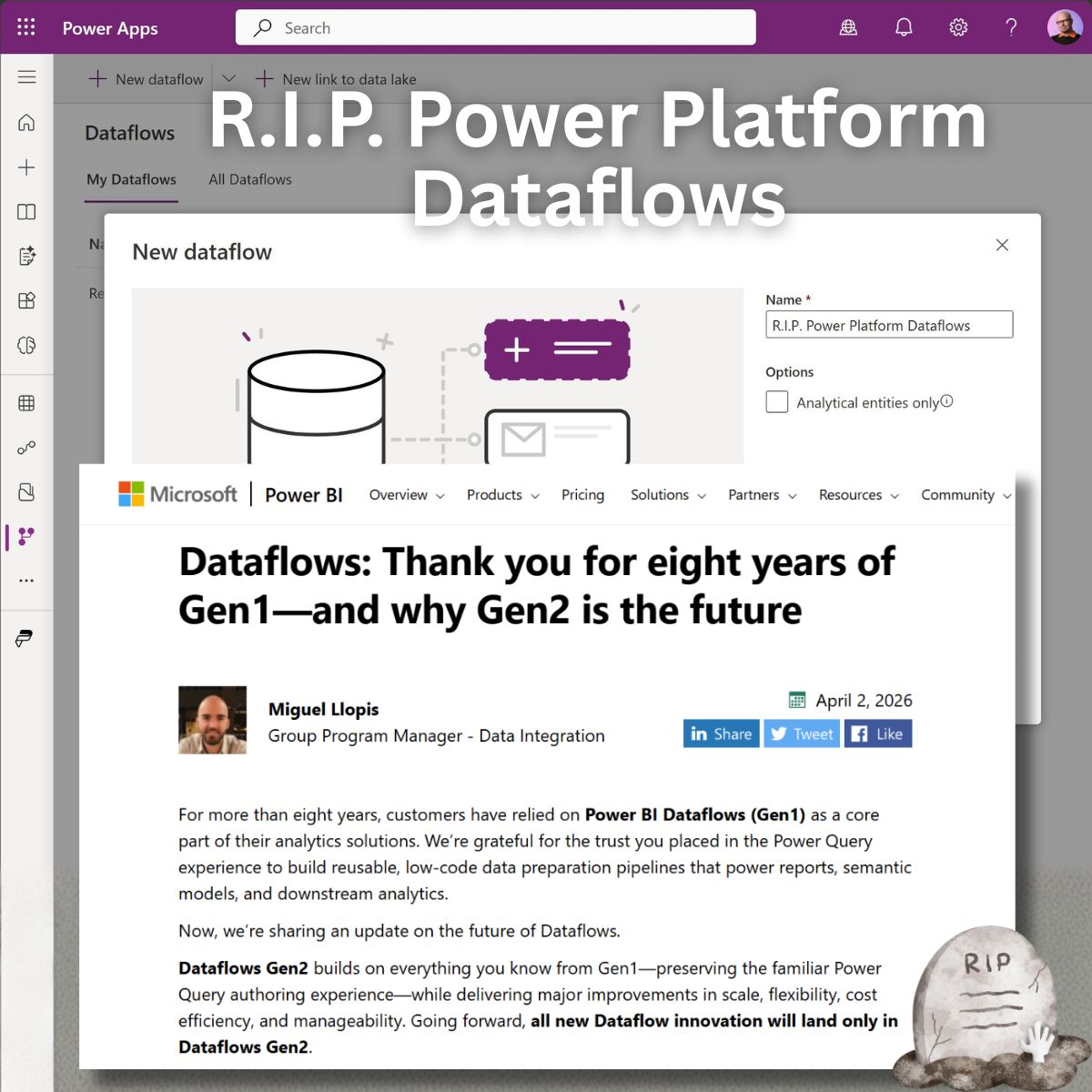

That is why the recent announcement on the Power BI product team blog caused some turmoil. The title was: “Dataflows: Thank you for eight years of Gen1—and why Gen2 is the future”. To understand the real impact, however, we need to look beyond the product marketing language and explore the dependencies between different services.

I don’t spend too much time on the MS data platform side in my daily work. But whenever I do, I try to focus on reading real-life experiences from users and consultants. In this case, the Reddit thread about this Dataflows Gen1 legacy status reveals the overall sentiment very quickly. With 200+ comments, most people seem to agree that Microsoft is pushing smaller customers into paying for Fabric capacity and dealing with more complex technology with seemingly little to no practical benefits.

This is perfectly in line with what has been going on for a while now. As the market for self-service BI in the original Power BI fashion now seems to be saturated (and Microsoft won that round), the growth must come from selling new, bigger things. I wrote about how the SMB customers of Power BI are getting left behind by Microsoft’s Fabric push in an earlier issue:

Now, why did I declare “R.I.P. Power Platform Dataflows” in my social post shown above? Did I not read the blog post in detail, to see that it references Power BI Dataflows? I did indeed, but in this business you won’t survive as a solution architect unless you learn to read between the lines of what Microsoft says.

The back story is that the Power Platform Dataflows were effectively abandoned years ago. They have been a dead feature for quite some time. Now, with their parent product, Power BI Dataflows, being officially placed into Legacy status and stamped with “no new features — ever”, it’s time to stop pretending otherwise. If Microsoft isn’t providing even Power BI users new functionalities unless they sign up for additional Fabric capacity in addition to their existing PBI licenses, why on earth would anything new land on the Dataverse side either?

The state of Dataflows in Power Platform

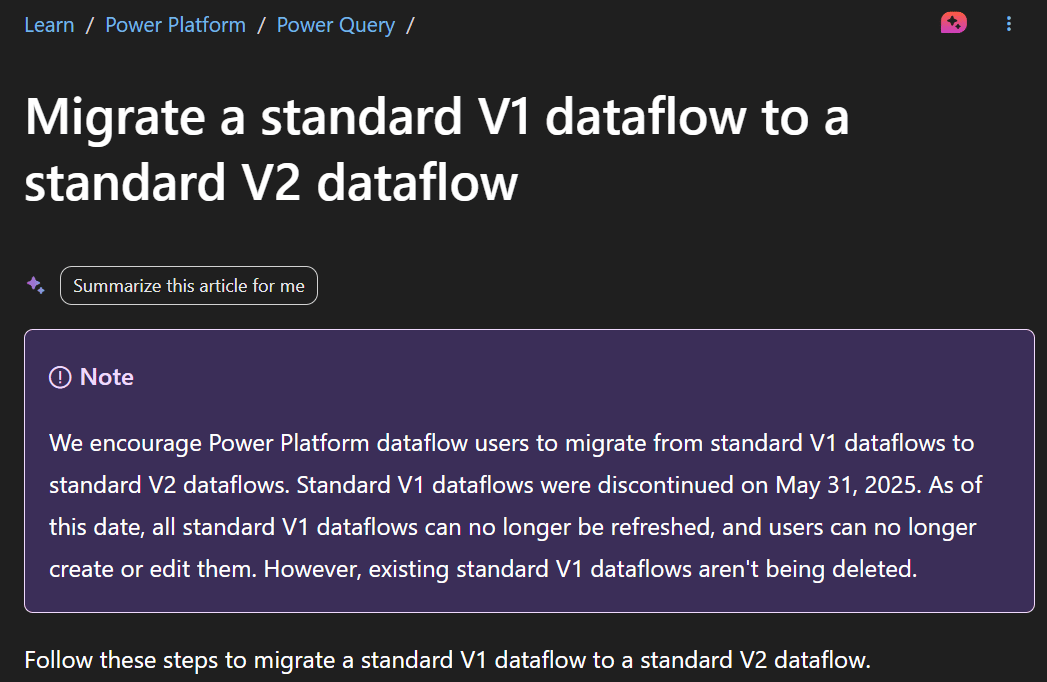

A couple of community members asked me if I wasn’t aware that Power Platform Dataflows had already been migrated to V2. I am very much aware, and I also understand how every single character in Microsoft’s materials can carry a specific meaning. In this case, the Dataflows Gen1 and Gen2 have nothing to do with this Standard Dataflows V1 to V2 migration that took place earlier.

V2 Dataflows were introduced around 2021, two years before Microsoft Fabric was announced. The last meaningful improvements to Power Platform Dataflows must have landed around 2022. Which would be cool if the product itself was already mature enough. But it’s not cool, because Dataflows are nowhere near “enterprise ready”. Here’s a rant I posted on LinkedIn about Dataflows in May 2022:

Dataflows - the least governable service in #PowerPlatform?

Considering how easy it is to create a standard Dataflow to schedule the retrieval of external data and push it into Dataverse, it is truly frightening how few admin capabilities exist for the feature. In fact, you can see all of them in the image below.

You can open an environment, go to "All Dataflows" view and then search by owner name - assuming that you know that there is something to search for.

How could you as an admin learn that there in fact are any Dataflows running in the environment? The only way seems to be to open the default solution and check if any components of type Dataflow exist in there. If they do, then you'll need to make note of the owner's name and go back to the "All Dataflows" view.

How do you find out what the Dataflows are actually doing? You don't - unless you are ready to forcefully change the ownership of the Dataflow to your account. There appears to be no way to read what the Dataflow does, or even see the run log to check if/when it is running and what errors it might be generating.

Only the Maker can tell what's happening to the Dataflow. What if they are not currently / any longer available? Good luck.

The fact that there are no admin APIs / PowerShell cmdlets to query for Dataflows in the tenant / inside environments means that these will all fly under the radar of Power Platform admins. You won't see them in CoE Starter Kit reports, nor anywhere else. You won't know they exist, until the impact of these Dataflows forces you to investigate.

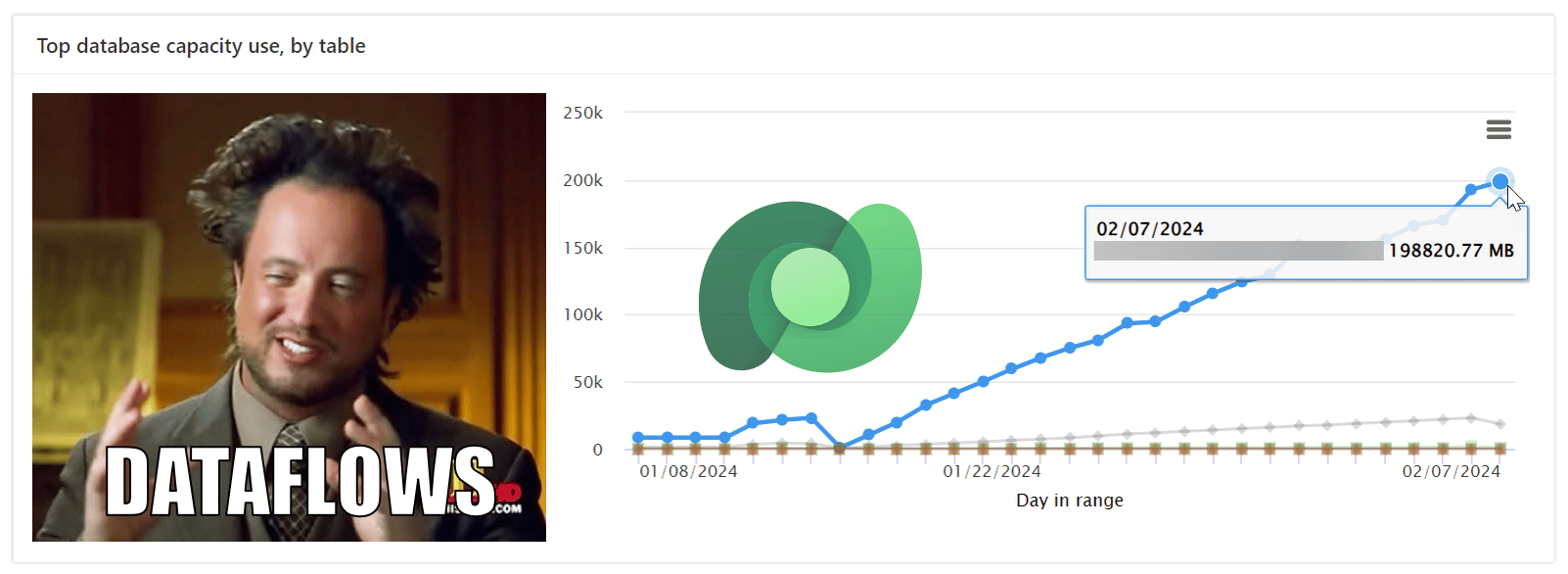

What if the Dataflows are syncing data from your ERP system into your Dataverse environment? What if there are now millions and millions of rows consuming capacity as a result of some poor choices in the solution architecture?

Can you create guardrails to say from which data sources your Makers are allowed to pull data into Dataverse? I don't think so. At least the Power Platform DLP policies aren't going to affect these Dataflows, since they are running on the Power Query connector architecture rather than the Power Apps / Automate connectors that the Power Platform environment specific DLP policies are controlling.

It really looks like there's only a single admin feature for standard Dataflows: "change owner". All the governance fundamentals around that are missing.

And that was just the governance part. Development is equally horrendous since the ALM story for Dataflows is unlike most other solution components today. Taking a look at the known limitations of Dataflows in solutions, we learn that:

Connection references aren’t supported.

Environment variables aren’t supported.

Component dependencies aren’t identified in solutions.

Application users (service principals) cannot deploy Dataflows.

Incremental refresh configuration isn’t supported when deploying solutions.

Linked tables between Dataflows aren’t supported.

Blocking unmanaged customizations isn’t supported.

The list makes it pretty clear that none of the platform side investments from the past five years have been implemented for Dataflows. Because obviously this technology has never been a part of the actual low-code platform we call Power Platform. It’s from “the other side”, meaning the data platform folks. When James Phillips, the guy who launched Power BI in a big way, was still leading the BizApps unit at Microsoft, there must have been enough alignment to keep the data and app product teams talking to one another. After he left in April 2022 and went to Stripe, there was nothing keeping the different teams aligned.

Which gets us back to the product management strategy. If Dataflows were Google technology, we would have seen three separate products launched and killed by now. But they are Microsoft technology, which means we keep having Dataflows in the discussion — even if the underlying stack has changed and one thing called Dataflow is not compatible with another thing called Dataflow. Just another Tuesday in Redmond.

Life after death

You might be rightfully asking “so what” at this point. While it ain’t fun that the service you’re using doesn’t have a roadmap, in everyday life we have to choose our battles. If you already have a bunch of Dataflows pumping data into your Dataverse environments, is it necessary to start planning an alternative right away?

Let’s make this clear: Microsoft is not saying they will take Power Platform Dataflows away. If anything, they’re pretty solid at keeping the lights on when it comes to services that business users rely on. Some may choose Microsoft precisely because they’ve been burned by sudden shutdown announcements from the likes of Google, let alone smaller SaaS players out there. It’s the same reason you may choose a Toyota over a BMW: there are fewer surprises ahead and the TCO is likely to be much better with Microsoft, even if you may not experience the same thrill while driving down the highway.

Here’s what the product team had to say in the comments for the “Gen1 bye-bye” post:

“Dataflows in the Power Platform address a very specific use case: Ingesting data into Dataverse for further usage within the Power Platform.

There are no implications/impact on Power Platform Dataflows on anything that we have covered in this blog.

Notably, Dataflow Gen2 does not currently support ingesting data into Dataverse, but this is something that we're considering for the future based on customer feedback.”

If you were thinking about exploring Dataflow Gen2 in Fabric as an option for Dataverse imports, you can stop pursuing that idea any further. It’s a different world and a different organization today, compared to 2019 when this slide of the original Power Platform Dataflows was presented:

Intro slide to Power Platform Dataflows from a 2019 bootcamp presentation.

Being a low-code developer at heart, I actually was excited back then about what an automatable data import mechanism built right into the platform could deliver. For the citizen developer audience that couldn’t realistically get the necessary pro-dev resources for a “real” integration between business systems, Dataflows were a very attractive alternative. Mainly because you could build and operate it inside the Power Apps maker portal, with no Azure services or access needed.

Switching on my low-code governance evangelist hat, there were plenty of reasons for concern about how Dataflows might be used. The LinkedIn post quoted above highlights what could go wrong. I’ve seen exactly these things go wrong since then in live customer tenants. Dataflows getting left outside planned tenant migration inventory due to lack of any API support for cataloging them. Runaway Dataflows filling up the entire tenant Dataverse capacity with a surprise 200 GB of database storage consumption, which then took ~2 weeks to even remove with regular bulk delete. And so on.

In 2026, the most pressing question that every tool like this should be able to answer is: why wouldn’t the customer just vibe code it with AI instead? For the sweet spot of where Power Platform Dataflows have been used, i.e. situations where “proper” tools haven’t been available, this is an existential question.

Clunky GUIs for mapping table columns, doing basic field type transformations, and then repeating actions across dev-test-prod via the GUI whenever changes are made — that’s not how teams will want to work in 2026. If there was proper support for programmatic creation and manipulation of Dataflow artifacts via tools like PAC CLI, it might be fine to keep using them in existing solutions. But there isn’t. It has been an awful experience whenever I’ve tried to get GitHub Copilot to help maintain or extend existing Dataflows. Much worse than Power Automate cloud flows, which at least play well with the fundamental platform concepts and can be managed via AI coding agents.

Thinking about a typical scenario where I’ve seen Power Platform Dataflows used, it would be a piece of cake for something like Claude Code to look at the existing configuration, and then rebuild it in Python, for example. Assuming that LLMs won’t go away, these custom code solutions would likely be easier to maintain than something built on Microsoft’s dead features that represent proprietary config files and hard limits on cloud service access.
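To make that a bit more concrete, here is a minimal sketch of what the core of such a rebuild might look like. It is an illustration under assumptions, not a production integration: it renames source columns to Dataverse columns and describes one upsert per row, using the Dataverse Web API pattern of a PATCH request addressed by an alternate key. The org URL, the source columns `CustNo` and `Name`, and the existence of an alternate key on `accountnumber` are all hypothetical. Authentication and the actual HTTP call are deliberately left out.

```python
import json
from urllib.parse import quote

# Web API version segment; an assumption, adjust to what your environment exposes.
API_PATH = "/api/data/v9.2"


def map_row(source_row, column_map):
    """Rename source columns to Dataverse column names, dropping unmapped ones."""
    return {
        target: source_row[source]
        for source, target in column_map.items()
        if source in source_row
    }


def build_upsert(org_url, entity_set, key_column, row):
    """Describe an upsert for one row: a PATCH addressed by an alternate key.

    Nothing is sent here. The caller would add an OAuth bearer token and
    issue the request with whatever HTTP client they prefer.
    """
    # OData string literals escape a single quote by doubling it.
    key_value = str(row[key_column]).replace("'", "''")
    url = (
        f"{org_url.rstrip('/')}{API_PATH}/"
        f"{entity_set}({key_column}='{quote(key_value)}')"
    )
    # The key travels in the URL, so it is left out of the payload.
    body = {name: value for name, value in row.items() if name != key_column}
    return {
        "method": "PATCH",
        "url": url,
        "headers": {
            "Content-Type": "application/json",
            "OData-Version": "4.0",
            "OData-MaxVersion": "4.0",
        },
        "body": json.dumps(body),
    }


if __name__ == "__main__":
    # Hypothetical source row and column mapping, e.g. from an ERP export.
    erp_row = {"CustNo": "A-100", "Name": "Contoso", "Internal": 1}
    mapping = {"CustNo": "accountnumber", "Name": "name"}
    request = build_upsert(
        "https://contoso.crm.dynamics.com",  # hypothetical org URL
        "accounts",
        "accountnumber",
        map_row(erp_row, mapping),
    )
    print(request["method"], request["url"])
    print(request["body"])
```

The point of the sketch is that the column mapping becomes plain data in a text file, so it can be diffed, reviewed and promoted across dev-test-prod like any other code.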

The only real question is: “Where do we run this Python script in production?” Your friendly AI chatbot will suggest plenty of options when prompted. Now, it is of course a bit problematic if everyone’s chatbot gives a different answer to this question and the code + data ends up going through various clouds that IT has no information about. Oh, if only there was some common low-code platform available to these tech-savvy business users to build their apps…

And that’s really the core issue here. Power Platform pretends to be exactly that solution for addressing shadow IT concerns, yet in the case of Dataflows it fails to deliver. Only the maker-facing tooling was ever built. None of the admin capabilities or ALM support was ever delivered. If Microsoft had properly invested in making Dataflows work like native solution components, it could have stood on top of all the security, governance and development layers that Microsoft’s other teams have created to make Power Platform a solid foundation for business apps and automations. Dataflows Gen1 could have kept serving most customer needs for Dataverse imports just fine for years.

But it didn’t happen — and now it never will. Another dead feature bites the dust.